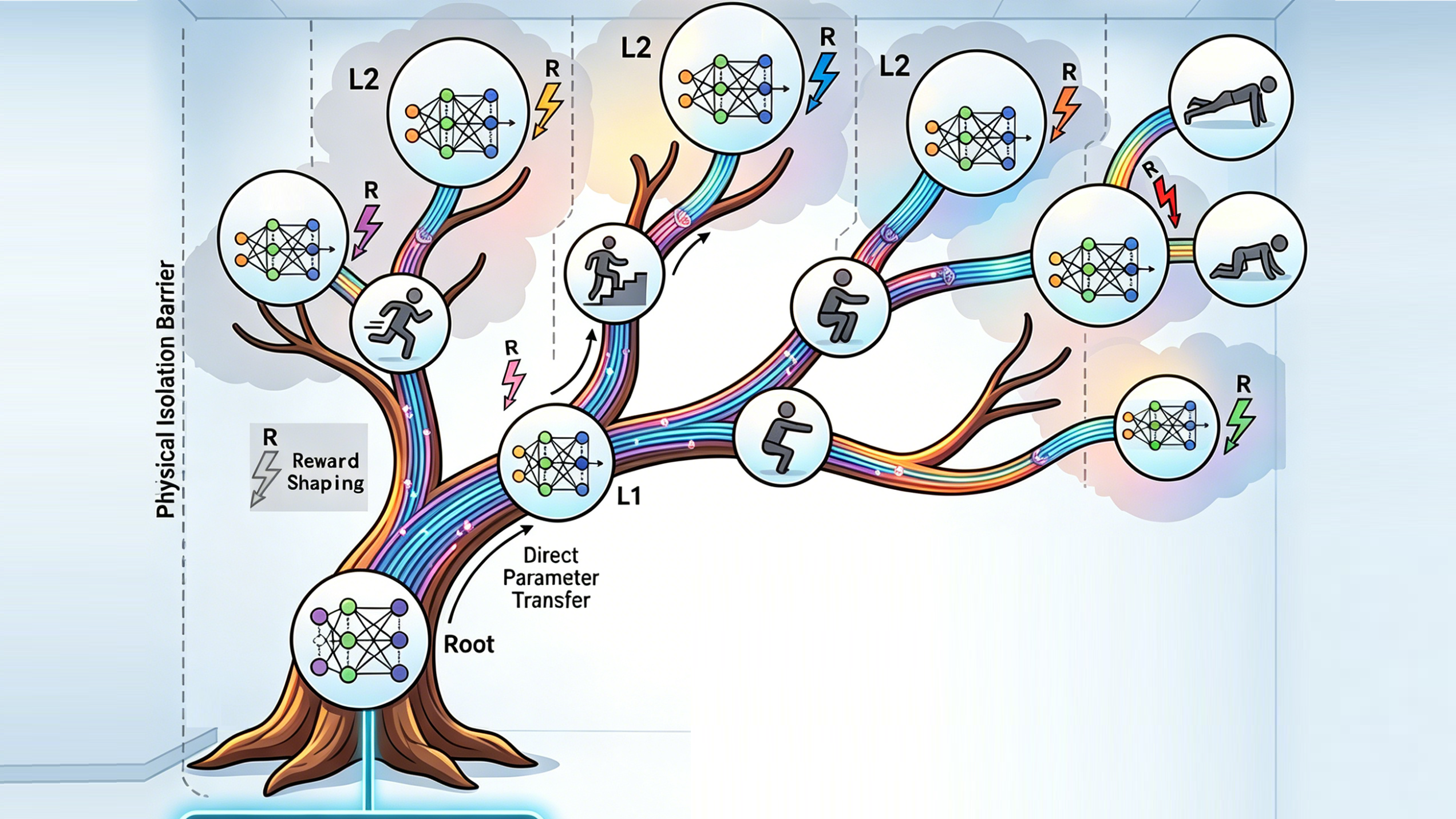

Tree Learning

A Multi-Skill Continual Learning Framework for Humanoid Robots

Video

Abstract

As reinforcement learning for humanoid robots moves from single-task to multi-skill settings, adding new skills efficiently without catastrophic forgetting has become a key challenge in embodied intelligence. Existing approaches either rely on complex topology adjustments in Mixture-of-Experts (MoE) models or require training very large models, which makes lightweight deployment difficult. To address this, we propose Tree Learning, a multi-skill continual learning framework for humanoid robots. The framework adopts a root-branch hierarchical parameter inheritance mechanism in which branch skills obtain motion priors through parameter reuse, preventing catastrophic forgetting by design. A multi-modal feedforward adaptation mechanism that combines phase modulation and interpolation supports both periodic and aperiodic motions, and a task-level reward shaping strategy accelerates skill convergence. In Unity-based simulation experiments, Tree Learning achieves higher rewards than simultaneous multi-task training across representative locomotion skills while maintaining a 100% skill retention rate, enabling seamless multi-skill switching and real-time interactive control.
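The root-branch inheritance idea can be illustrated with a minimal sketch. This is not the framework's implementation: the `SkillTree` class, the flat parameter lists, and `toy_train` (a stand-in for reinforcement-learning fine-tuning) are all hypothetical, and treating "walk" as the root with "run" and "jump" as its branches is an assumption made for illustration. The sketch shows only why copying a parent's parameters before training a branch leaves every earlier skill untouched.

```python
import copy
import random


def init_policy(n_params, seed):
    """Hypothetical policy: a flat list of weights."""
    rng = random.Random(seed)
    return [rng.uniform(-1.0, 1.0) for _ in range(n_params)]


def toy_train(params, target=0.5, lr=0.1, steps=50):
    """Stand-in for RL fine-tuning: pulls each weight toward `target`."""
    p = list(params)
    for _ in range(steps):
        p = [w - lr * (w - target) for w in p]
    return p


class SkillTree:
    """Keeps one stored parameter set per skill (illustrative only)."""

    def __init__(self):
        self.params = {}  # skill name -> list of weights
        self.parent = {}  # skill name -> parent skill name (None for root)

    def add_root(self, name, params):
        self.params[name] = list(params)
        self.parent[name] = None

    def add_branch(self, name, parent, train_fn):
        # Inherit: start from a copy of the parent's parameters (the prior).
        start = copy.deepcopy(self.params[parent])
        # Train only the copy; the parent's stored weights are never modified.
        self.params[name] = train_fn(start)
        self.parent[name] = parent


tree = SkillTree()
tree.add_root("walk", init_policy(4, seed=0))
root_before = list(tree.params["walk"])

tree.add_branch("run", "walk", toy_train)
tree.add_branch("jump", "walk", toy_train)

# Retention check: the root skill is bit-for-bit unchanged after branching.
root_retained = tree.params["walk"] == root_before
print("root retained:", root_retained)
```

Because each branch trains its own copy, retention in this toy setting follows from the data layout rather than from any regularization term. The cost is one parameter set per skill.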

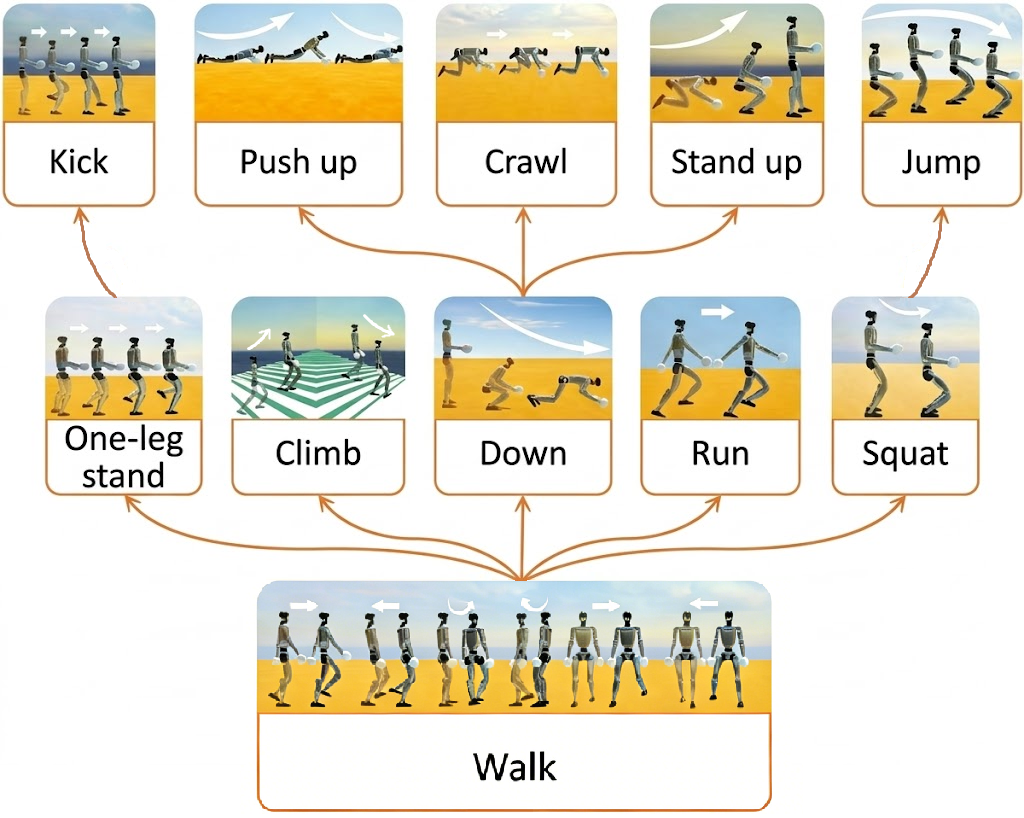

Tree Learning for Unitree G1

The framework is validated on the Unitree G1 robot in Unity simulation, learning 11 locomotion skills with full retention.

0 Walk

1.1 Run

1.2 Climb

1.3 Squat

1.4 Jump

1.5 One-Leg Stand

2.1 Kick

2.2 Down

2.3 Push Up

2.4 Crawl

2.5 Stand Up
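The numbering in the listing above can be read as a position in the skill tree. The short sketch below groups the skills by the leading number of each identifier. Interpreting that leading number as a tree tier (0 for the root, 1 and 2 for branch groups) is an assumption based only on the labels; the listing does not state which parent each branch inherits from, so no parent links are encoded here.

```python
from collections import defaultdict

# Skill identifiers and names exactly as they appear in the listing.
SKILLS = [
    ("0", "Walk"),
    ("1.1", "Run"),
    ("1.2", "Climb"),
    ("1.3", "Squat"),
    ("1.4", "Jump"),
    ("1.5", "One-Leg Stand"),
    ("2.1", "Kick"),
    ("2.2", "Down"),
    ("2.3", "Push Up"),
    ("2.4", "Crawl"),
    ("2.5", "Stand Up"),
]


def tier(skill_id):
    """Leading number of the identifier (assumed to be the tree tier)."""
    return int(skill_id.split(".")[0])


by_tier = defaultdict(list)
for skill_id, name in SKILLS:
    by_tier[tier(skill_id)].append(name)

for t in sorted(by_tier):
    print(t, by_tier[t])
```

Under this reading, the listing contains one tier-0 skill and five skills in each of tiers 1 and 2, for 11 skills in total.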

Downstream Applications

We evaluate Tree Learning on two Unity tasks: a Super Mario-inspired interactive scenario and autonomous navigation in a classical Chinese garden.

Super Mario Game

Chinese Garden Navigation